Exposing Multiple Services Via a Single http Port Using Jupyter nbserverproxy

Author: Tony Hirst

Over the last couple of weeks I’ve been circling, but failing to make much actual progress on using, OpenStack as a platform for making self-serve OU hosted VMs available to students. (I’m increasingly starting to think this is not sensible, but I’m struggling to find someone I can chat to about it… OpenStack is too enterprise, like a heavy “Java” thing where I need a just works “Python” thing…)

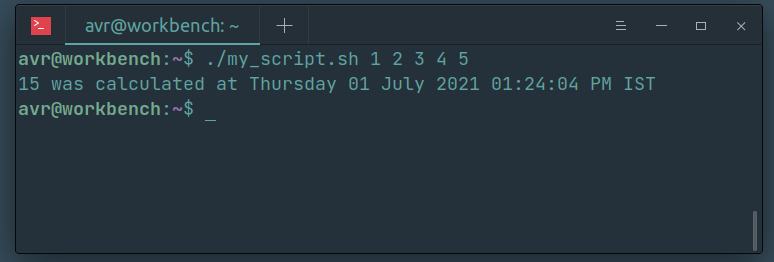

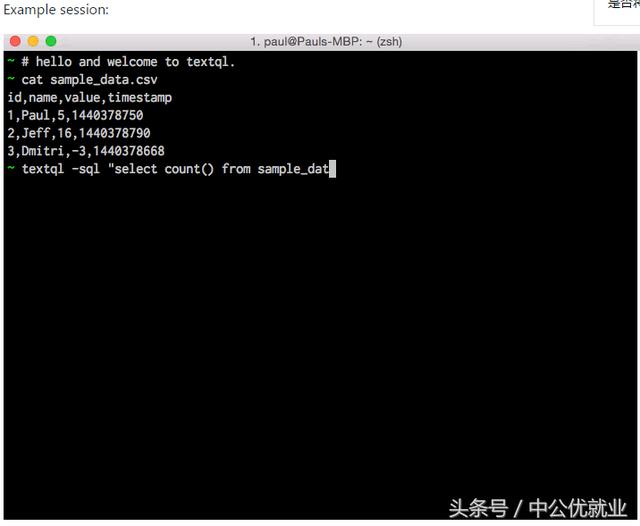

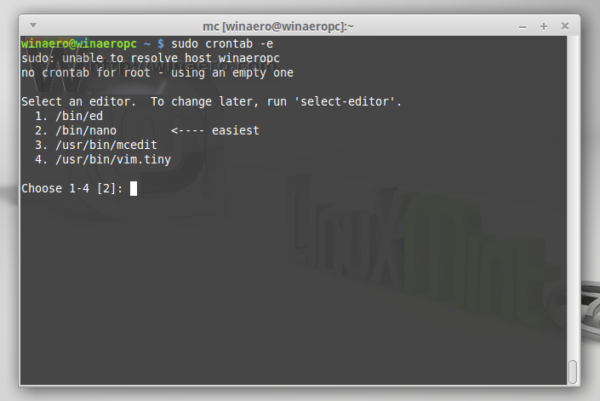

A quick recipe for grabbing live race data, in this case GPS data from WRC live maps.

Create a Remote Server on Digital Ocean

First, we need a server somewhere. A quick way to do this is to create a simple Digital Ocean droplet: a simple Linux box with the minimum spec will do.

You can get some free Digital Ocean credit by signing up for the Github education pack. You get a smaller amount of credit ($10) if you sign up with my affiliate link (sign up for Digital Ocean and get $10 credit), and then if you end up spending $25 or more of your own cash on that account, I get a $25 h/t.

You can log in to the droplet using a web terminal or via ssh (Digital Ocean droplet: Connect with SSH).

To set up a local ssh key to simplify login to the droplet (from a Mac), we can add our public key to the droplet as an ssh key. On the local machine, get your public key, which looks something like:

    ssh-rsa AAAA_RANDOM/STUFF_fZ

Add the SSH key content (ssh-rsa AAAA_RANDOM/STUFF_fZ) to the droplet, along with a Name. If the droplet is already created, use ssh-copy-id instead. (To use this route, I had to reset the droplet password first.)

Now we should be able to log in without a password directly over ssh.

Once logged in over ssh, update the package lists so we can find required packages.

Create a script to grab the data; if we are logging into a directory, make sure we create it first. Then write a simple logger script in the nano editor, along these lines:

    #datagrab.py
    ...
    if 'status' in x and x['status'] == 'Competing': competing = True
    ...
    with open('{}/{}.txt'.format(d, ts), 'w') as outfile:
    ...

Ctrl-x and save to get out of the nano editor.

Alternatively, if you have a pre-existing data logger script (datalogger.py) on your local machine, copy it into the droplet using scp.

The script probably doesn’t need to run all the time. Instead, we can start it at a particular time as a cron job, and run it under timeout to stop it after a certain amount of time.

To simplify setting cron jobs, it makes sense to reset the server time to the local timezone. For example, to set the timezone to Sydney local time:

    timedatectl set-timezone Australia/Sydney

Reboot, and log back in when the droplet has had time to restart. Then check the local time of the droplet, just to make sure we know how local time in the droplet compares to our local time.

To start at the top of the hour at 5am (droplet local time) on 28th July, for example, and run for 5 hours, add a crontab entry such as:

    0 5 28 7 * timeout 5h python3 datagrab.py

Or to start at 11.45am on 28/7 for half an hour, something like:

    45 11 28 7 * timeout 30m python3 /root/datagrab.py

If we leave the droplet running, the datagrabber should run over the desired periods and save the data inside the droplet.

When we’re done, zip the files that we saved inside the droplet. Then, on the local machine (type exit to close the ssh session), copy the zip file from the remote droplet back to the local host.

PS by the by, to launch a really simple webserver on the remote machine, install flask (pip3 install uwsgi flask) and create a small app script (#webdemo.py).

PS to do: scheduling things automatically; timezone handling, python-crontab, timezonefinder.
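For reference, here is a minimal sketch of the sort of logger script the recipe has in mind. It is not the original datagrab.py: the URL, the function names, the polling interval and the JSON response format are all assumptions made for illustration. It polls an endpoint, creates the log directory if it is missing, and writes each response to a timestamped .txt file.

```python
import json
import time
from datetime import datetime, timezone
from pathlib import Path
from urllib.request import urlopen

# Hypothetical endpoint: swap in the live-data URL you actually want to poll.
DATA_URL = "https://example.com/livedata.json"


def fetch_json(url=DATA_URL):
    """Fetch a URL and parse the response body as JSON."""
    with urlopen(url, timeout=30) as response:
        return json.loads(response.read().decode("utf-8"))


def anyone_competing(records):
    """Return True if any record has a 'status' of 'Competing'."""
    return any(
        isinstance(x, dict) and x.get("status") == "Competing" for x in records
    )


def save_snapshot(data, directory):
    """Write one snapshot to <directory>/<UTC timestamp>.txt and return its path."""
    d = Path(directory)
    d.mkdir(parents=True, exist_ok=True)  # create the log directory if needed
    ts = datetime.now(timezone.utc).strftime("%Y%m%dT%H%M%S%fZ")
    path = d / "{}.txt".format(ts)
    with open(path, "w") as outfile:
        json.dump(data, outfile)
    return path


def run(fetch=fetch_json, directory="data", interval=10, max_polls=None):
    """Poll repeatedly, saving each response; return the number of polls made.

    With max_polls=None the loop runs until the process is killed,
    for example by running the script under `timeout`.
    """
    polls = 0
    while max_polls is None or polls < max_polls:
        save_snapshot(fetch(), directory)
        polls += 1
        if max_polls is None or polls < max_polls:
            time.sleep(interval)
    return polls
```

Calling `run()` at the bottom of the script would keep it polling until cron's `timeout` wrapper stops it; passing `max_polls` is handy for a quick check that files are being written before scheduling the real thing.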
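As a small step towards scheduling things automatically, the crontab entries used in the recipe can be generated from a start time and a duration. This is only a sketch: `cron_line` is a hypothetical helper (it does not use python-crontab), the start time is assumed to already be in the droplet's local time, and the year is arbitrary because cron ignores it.

```python
from datetime import datetime


def cron_line(start, duration, command):
    """Build a crontab entry that starts `command` at `start` under `timeout`.

    start:    datetime in the droplet's local time (the year is ignored by cron)
    duration: a string understood by GNU timeout, such as "30m" or "5h"
    """
    # crontab fields: minute hour day-of-month month day-of-week
    return "{} {} {} {} * timeout {} {}".format(
        start.minute, start.hour, start.day, start.month, duration, command
    )


# 5am on 28th July for five hours
print(cron_line(datetime(2000, 7, 28, 5, 0), "5h", "python3 datagrab.py"))
# → 0 5 28 7 * timeout 5h python3 datagrab.py

# 11.45am on 28th July for half an hour
print(cron_line(datetime(2000, 7, 28, 11, 45), "30m", "python3 /root/datagrab.py"))
# → 45 11 28 7 * timeout 30m python3 /root/datagrab.py
```

The generated lines can be pasted into the crontab by hand; handling timezones (so the start time can be given in the event's local time rather than the droplet's) is left as part of the to-do.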
